During the management process, the test data is associated with a specific test, which can feed into an automation tool that confirms the data is provided in the expected format at whatever level is required. When it comes to managing your software development lifecycle (SDLC), one of the most difficult aspects is effectively managing and deploying data. Building “data-ready” test environments can effectively support your engineering practices across the development and test lifecycle, a discipline better known as test data management (TDM). In this Refcard, explore key TDM patterns and anti-patterns for your data factory. Ensure that any TDM solution adheres to relevant regulatory requirements and includes robust security measures to protect sensitive data.

Out-of-box Support For Popular Applications

Data literacy will contribute to SDLC delivery activities such as development, testing, and support tasks like data synthesis. Identifying data requirements after the environments are provisioned will invariably lead to lengthy delays and inhibit broader Dev, Test, and QA endeavors. For each user story, make sure that your data needs (tasks) are captured and articulated effectively. After acquiring the required test data, generating missing data, and masking it as required, it’s time to move it to the target test environments.

What Are The Common Methods Used With Test Data Management?

The sales team representative will then compile and forward all pertinent information to the Fulfillment and Provisioning Team to enable Test Data Management for the required Build Place. Added and approved Test Data are only available in the Quality Assurance (QA) and Development (Dev) environments. These environments will not be provisioned with the default R&D primary data. The Test Data Management feature consists of four main processes, as mentioned below.

What’s Test Data Management? Significance & Best Practices

Preventing this situation requires rigorous testing, including testing of edge cases. Thus, data sets used in testing must be thorough and adequately represent production data. When this process isn’t operating optimally, companies can expect more frequent delays, higher costs, and reduced project management and planning efficiency. However, getting test data management right can increase productivity, empower employees, and increase the number of on-time and on-budget project completions. While there’s a lot to learn about test data management, this guide is designed as a starting point for companies looking to master the process.

The generation of sufficient test data is one of the bigger challenges that drive this trade-off. With good TDM, DevOps and test teams can speed up the process without sacrificing quality. TDM ensures you always have high-quality data for testing purposes, which leads to more reliable software and more successful and cost-effective deployments. Just enter the number of people in your development and testing teams along with inputs for test environments, defects, and delivery delays.

DevOps teams often can’t meet ticket requests because of the time and effort required to formulate test data. This can negatively impact testing quality, resulting in expensive, late-stage issues. The TDM solution should focus on reducing the time required to refresh the environment, which makes the latest version of the test data more accessible. Otherwise, even with test automation processes, testing can be complex and error-prone, and may include unprotected sensitive data. This process, with TDM playing a central role, ensures that testing environments are provisioned with the needed compliant test data in a timely manner. Test data automation is the process of automatically delivering test data to lower environments, as requested by software and quality engineering teams.

If you change “Simon” to “John” here, then make sure you change “Simon” to “John” there. Inconsistent masking within an application or across applications is likely to cause serious data integrity issues. Data masking is a data security technique in which a dataset is copied but the PII and/or sensitive data is obfuscated. Sub-setting needs to be precise; otherwise, the process will result in required data going missing and/or relationships being lost.
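The consistency rule above (the same real value must always map to the same masked value, in every table and application) can be sketched with a keyed hash. This is a minimal illustration, not a production masking tool: the key, the replacement name pool, the table layouts, and the function name `mask_first_name` are all hypothetical, and with a small pool different real names may share a replacement.

```python
import hashlib
import hmac

# Hypothetical secret; in practice it would come from a secrets store.
MASKING_KEY = b"example-masking-key"

# Hypothetical pool of replacement first names.
REPLACEMENT_NAMES = ["John", "Maria", "Wei", "Aisha", "Carlos", "Elena", "Tomas", "Priya"]


def mask_first_name(real_name: str) -> str:
    """Map a real first name to a replacement; the same input always gives the same output."""
    normalized = real_name.strip().lower().encode("utf-8")
    digest = hmac.new(MASKING_KEY, normalized, hashlib.sha256).digest()
    index = int.from_bytes(digest[:4], "big") % len(REPLACEMENT_NAMES)
    return REPLACEMENT_NAMES[index]


# Two hypothetical tables that both carry the same person's first name.
customers = [{"id": 1, "first_name": "Simon"}]
orders = [{"order_id": 10, "customer_first_name": "Simon"}]

masked_customers = [
    {**row, "first_name": mask_first_name(row["first_name"])} for row in customers
]
masked_orders = [
    {**row, "customer_first_name": mask_first_name(row["customer_first_name"])}
    for row in orders
]
```

Because the mapping depends only on the key and the normalized input, masking the two tables separately, or even in separate applications that share the key, still yields matching values.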

Explore the most popular and best types of BDD testing tools available for developers across different programming languages and development platforms. Establishing a review and auditing process ensures that the test data is accurate, reliable, and complies with data privacy regulations. Regular reviews by designated stakeholders, along with internal audits, help identify any anomalies or data quality issues. When companies change systems or move data to a more secure location, they typically need to perform a data migration. If a company wants to use cloud-based solutions, it must move existing data from local hardware to a cloud environment.

Don’t assume that “synthetic data” is universally suitable for all test phases. The value of “fake data” will usually diminish later in the lifecycle as acceptance and cross-platform integration coverage increases. This involves building “data-ready” test environments that can effectively support your engineering practices across the development and test lifecycle, a discipline better known as test data management (TDM). For the configuration of test data, users are advised to place an order for an Initial Quality Assurance (QA) environment.

When TDM is used, the same repository is shared by all the teams, and hence storage space is used efficiently. We will use the production data after masking or hiding the sensitive information. This masking comes under TDM (Test Data Management), where we intend to keep the sensitive production data separate from the test data. Some companies will develop and release open-source tools in-house and will offer support as an add-on, but these plans can be costly, essentially requiring you to pay for development through support fees. The decision to forgo support or pay for these add-ons depends on your specific needs and risk tolerance. With pseudonymization, sensitive data is replaced, but in such a way that aspects of the data still remain.

- The value of “fake data” will usually diminish later in the lifecycle as acceptance and cross-platform integration coverage increases.

- The tool also enhances data security through masking while maintaining referential integrity.

- It consists of the planning, organization, coordination, and control of testing activities for a software development project.

- Building “data-ready” test environments can effectively support your engineering practices across the development and test lifecycle, a discipline better known as test data management (TDM).

By implementing a robust TDM solution, organizations can significantly improve their testing efforts, reduce risks, and achieve better business outcomes. Whether you’re operating in financial services, healthcare, or any other industry, investing in the right tools and technologies for test data management can lead to substantial benefits. The fifth step to a compliant test data management strategy is to allow for synthetic data generation when test teams can’t extract a sufficient volume of test data from production.

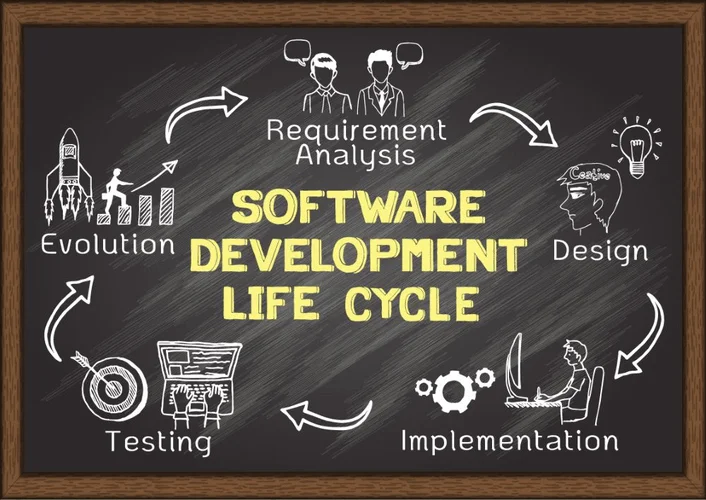

This makes synthetic data creation an appealing option for initial prototyping of new features or model exploration of test data sets. There are four common ways to create test data for software development teams and testing teams within the SDLC. Synthetic data generation is the process of creating artificial datasets that simulate real-world data without containing any sensitive or confidential information. This approach is usually reserved for cases where real data is difficult to obtain (e.g., financial, medical, or legal data) or risky to use (e.g., employee personal information).
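As a rough illustration of synthetic data generation, the sketch below builds customer records from scratch using only Python's standard library. The field names, name lists, and value ranges are assumptions made for this example; a real generator would follow the schema and constraints of the system under test.

```python
import random
import string


def make_synthetic_customer(rng: random.Random, customer_id: int) -> dict:
    """Build one artificial customer record that contains no real data."""
    first = rng.choice(["Alex", "Sam", "Jordan", "Taylor"])
    last = rng.choice(["Smith", "Garcia", "Chen", "Okafor"])
    account = "".join(rng.choice(string.digits) for _ in range(10))
    return {
        "id": customer_id,
        "name": f"{first} {last}",
        # example.com is reserved for documentation, so no real mailbox is hit.
        "email": f"{first}.{last}{customer_id}@example.com".lower(),
        "account_number": account,
        "balance": round(rng.uniform(0, 10_000), 2),
    }


def make_synthetic_customers(count: int, seed: int = 42) -> list[dict]:
    """Generate `count` records; a fixed seed makes the data set reproducible."""
    rng = random.Random(seed)
    return [make_synthetic_customer(rng, i) for i in range(1, count + 1)]


customers = make_synthetic_customers(5)
```

Seeding the generator is a deliberate choice here: a failing test can be rerun against exactly the same data set.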

Test data management is a critical component of effective software testing and quality assurance. By implementing robust test data practices, organizations can improve test coverage, increase efficiency, and ensure the reliability of their software products. This includes reducing defects in the data (such as misleading edge cases), as well as processes for ensuring data is properly secured through means such as masking, scrambling, or pseudonymization.

When done effectively, product backlog refinement helps the team prepare for upcoming sprints. In the long run, consistent product backlog refinement ensures alignment with the product roadmap and contributes to improving the overall quality of the product. Productboard can significantly aid product backlog refinement by providing a centralized platform for teams to collaboratively manage and prioritize backlog items.

Determine Who Attends Backlog Refinement Meetings

Product backlog refinement is the process of reviewing and updating items in the backlog regularly to improve project management. This includes breaking down larger items into smaller slices, adding new items, removing unnecessary ones, and ensuring the existing items have clear acceptance criteria. Whether you’re new to product backlog refinement, or your refinement practices need a little…ahem…refinement, this article will give you a comprehensive overview of the product backlog refinement process. Think of product backlog refinement as tending to a thriving garden. The backlog acts as the garden bed, a place where ideas wait to blossom into features, improvements, and fixes. Just like a gardener weeds and prunes for healthy growth, refinement eliminates unnecessary tasks and prioritizes the most valuable work.

Product Backlog Vs Sprint Backlog

You open the fridge and discover you don’t have quite everything you need to make a full meal because you didn’t take the time to check and prepare the right ingredients. Making dinner just became far more of a chore than originally anticipated. That means you already have items in your backlog, but they may need some information or an update before they’re implemented. Gojko Adzic gives a good explanation of the importance of specifying through examples. Also, Matt Wynne’s Example Mapping is a good way to break down the scope of a large item and identify the key questions you might want to discuss with examples.

Streamline Backlog Refinement Meetings With Jira

Note that the list below is not intended to be complete, nor is it a mandatory process to be followed. We expect teams to try it out in practice and, over a period of time, to experiment with variations and/or add new practices. Since late 2021, teams have done large group refinement numerous times.

Backlog Refinement Vs Sprint Planning

Before the meeting, you should know what’s in the backlog and the context behind the most relevant items. You won’t have time during the meeting to discuss every item, so focus on something specific, such as the refinement of buckets (e.g., the tech debt bucket). Aim to host a refinement session somewhere in the middle of a sprint (it should be completed before the next sprint begins, and out of the way of sprint reviews, retros, and so on). Make it a recurring meeting in your product team’s calendars, so that you always have ample time to review the list, generate new ideas, and gather relevant materials in advance.

Ensure The Right People Are Taking Part

- The most important or urgent PBIs are placed at the top of the list so the team knows what needs to be worked on next.

- The best way to determine how often your team needs Product Backlog Refinements and how long the meetings should last is by gaining experience and making mistakes.

- The following steps will help you create a simple and repeatable process that assembles the right people, sets expectations, prioritizes and evaluates items, and defines the next steps.

- Product Owners know that ordering the Product Backlog isn’t something they have to do alone.

- Refining the backlog saves time and money by ensuring that its items are ready for development at the right time.

- Here are two effective techniques used for product backlog grooming.

To meet your deadline, you may have to sacrifice some of the “nice to have” items in the backlog in order to get the priority items completed and polished. Because these meetings require the team to discuss each item in detail, the team and stakeholders develop a shared understanding of what the work requires and which items should be prioritized. For an Agile team to effectively evaluate the complexity of an item, they must have a shared understanding of the user story. User stories are informal explanations of what the feature does from the customer’s perspective.

Backlog Refinement (Backlog Grooming): How To Embed Best Practices

The Development Team’s role is to bring their technical expertise to the table. They work closely with the Product Owner to fully understand the requirements of each backlog item, breaking larger tasks into smaller, actionable steps. For an agile team to be effective and achieve desired market and business goals, it is essential to align their daily work with the strategic goals of the organization.

Importance Of Using Milestones In Project Planning

This approach will help reconnect the leaders, business representatives, and subject matter experts with the people who are much closer to the product challenge at hand. Navigating the Backlog Refinement process is like charting a course for a successful Agile journey. It’s about bringing clarity, prioritization, and alignment to your Product Backlog, ensuring your team is ready for every Sprint Planning session. Remember, the goal of a Backlog Refinement meeting isn’t just to go through the motions, but to make sure that the Scrum Team is on the same page and prepared for the upcoming Sprint. Refine your backlog with collaborative software that your entire team can access. Software like Asana keeps your sprint structured, clarifies owners and deadlines for each task, and makes important details easy to find.

Instead, refine it just in time, repeatedly as needed, adding more details as more is learned. As an item moves up the product backlog, we might consider addressing who benefits from the feature, what they want, and why they want it, and write it as a user story. As the user story moves further up the product backlog, we might consider adding acceptance criteria to further clarify the user story. As we discover more, we size it and consider splitting it into multiple user stories if it is too large or too complex. During a backlog refinement session or meeting, developers, team leads, and stakeholders work to address and prioritize items in a product’s backlog.

However, if the idea is important enough and will really add value, it will come up again, and you can add it to the backlog later. Trying to keep a backup backlog creates too much overhead and makes it messy to sort through later. Emergent means that new ideas should keep being added to the Product Backlog as and when new discoveries are made. This is because refinement is a dynamic process, and emerging ideas are required to keep the process continuous.

This prioritized backlog then becomes the main focus of the next sprint planning session. During these sessions, they create a shared understanding of the goals for each item and discuss the order of the items in the backlog. The definition of done (DoD) is a set of criteria that determines when a PBI is completed and ready to be shipped or deployed. It can include technical, functional, quality, and user acceptance aspects that the team and the stakeholders agree on. You should review and update your DoD regularly during product backlog refinement to ensure that it reflects the current expectations and standards of your product. You should also make sure that your PBIs are aligned with your DoD and that they can be verified and validated according to it.

An effective root cause analysis must be carried out carefully and systematically. It requires the right methods and tools, as well as leadership that understands what the effort involves and fully supports it. No, Failure Mode and Effects Analysis (FMEA) isn’t a root cause analysis.

What Is The 5 Whys Approach To Root Cause Analysis?

Below we’ll cover some of the most common and most widely useful techniques. Prevent or mitigate any negative impact on the goals by selecting the best solutions. Effective solutions should change how people execute the work process. Root cause analysis is about digging beneath the surface of a problem. However, instead of looking for a singular “root cause,” we shift your problem-solving paradigm to reveal a system of causes. When using Cause Mapping root cause analysis, the word root in root cause analysis refers to causes that are beneath the surface.

Why Root Cause Analysis Is Such A Powerful Tool

Hasty decision-making might even make a bad situation worse. Despite these problems and limitations, RCA remains a powerful tool for understanding your systems and enabling preventive asset maintenance. While the methods and tools empower root cause analysis execution, real-world success also depends on excellence in engagement, analysis, and implementation. From manufacturing shop floors to software quality assurance to overall business productivity, root cause analysis crosses functions to drive operational gains. By getting ahead of downstream issues, FMEA provides an alternative lens that complements the retrospective investigation of failures through other root cause analysis approaches.

How Do You Do A Root-cause Analysis?

When a system breaks or changes, investigators should perform an RCA to fully understand the incident and what brought it about. Root cause analysis is a step beyond problem-solving, which is corrective action taken when the incident occurs. Conducting a root cause analysis and implementing appropriate solutions helps employers significantly reduce or completely avoid the repetition of the same or similar problems and incidents.

Focus On Correcting And Remedying Root Causes Rather Than Just Symptoms

To perform an effective root cause analysis, it is important to use a stepwise approach and take the time to reach a detailed understanding at every stage before moving on. Figure 4 shows the steps of the structured approach to root cause analysis. Although there is general agreement on the necessary actions, various texts on root cause analysis use different numbers of steps within their models. A seven-step process is used here because it covers both the analysis and the implementation and verification of corrective actions.

Key Takeaways On How To Do Root Cause Analysis

Our solution is designed to help drive improvements in your business operations. IBM research proposes an approach to detect abnormality and analyze root causes using Spark log files. Intelligent asset management, monitoring, predictive maintenance and reliability in a single platform. An Ishikawa diagram (or fishbone diagram) is a cause-and-effect style diagram that visualizes the circumstances surrounding a problem. The diagram resembles a fish skeleton, with a long list of causes grouped into related subcategories. Human causes arise when people make mistakes or fail to complete required tasks (for example, an employee fails to perform regular maintenance on a piece of equipment, causing it to break down).

- Gather data, talk to people involved, and confirm the problem’s significance.

- Third, there may be more than one root cause for a given problem, and this multiplicity can make the causal graph very difficult to establish.

- In this way, employers help reduce the risk of death or injury to workers, the community, or the environment.

- Root cause analyses also help prevent the problem from arising again.

Root Cause Investigations And Tools

Root cause analysis is often used in proactive management to identify the root cause of a problem, that is, the factor that was the leading cause. It is customary to refer to the “root cause” in singular form, but one or several factors may constitute the root cause(s) of the problem under study. Proactive management, conversely, consists of preventing problems from occurring. Many techniques can be used for this purpose, ranging from good practices in design to analyzing in detail problems that have already occurred and taking actions to make sure they never recur. Speed isn’t as important here as the accuracy and precision of the diagnosis. The focus is on addressing the real cause of the problem rather than its effects.

Tips For Conducting Effective RCA:

Root cause analysis training is needed for your team to promote a safety culture and a high standard of quality across sites and departments. With mobile tools like Training, you can create, test, and deploy mobile courses to ensure that your teams are in the loop and comply with your standards in process improvement. As one of the most in-depth root cause analysis methods, the Failure Mode and Effects Analysis (FMEA) process uses hypothetical “What if?” questions. This is best applied to identify cause-and-effect relationships that aim to explain why specific problems happen, including the one you’re dealing with. Once the team settles on root causes and has laid out all the details of the problem, they must start brainstorming solutions.

Instead, conduct one-on-one interviews with them to gather information objectively. Invite representatives from related departments, such as engineering or operations, to take part in the RCA exercise to ensure a more impartial analysis. In this example, inadequate training for maintenance technicians emerges as the root cause. Performing the RCA method in the right steps with the 5P fundamentals above can lead to accurate results in examining the root cause. People refers to those who are involved in the incidents (including the engineer, technician, and the final inspector assessing the safety). All you have to do is start with a problem statement and then ask “Why?”

The key point is to get as many ideas down as possible for later analysis, and it’s usually necessary to assign the role of scribe to one team member in order to record the ideas effectively. Successful RCA demands specialized knowledge and hands-on experience. Without the right know-how and tools, you’ll only manage to provide short-term fixes for adverse events.

Participating in such training courses helps you understand the importance of identifying the root cause of a problem to ensure it does not recur. In addition, courses help identify common barriers and problems in conducting an RCA. Classify the causes into the causal factors that relate to a critical moment in the sequence and the underlying causes. If there are multiple causes, which is often the case, document these and dig deeper, ideally in order of sequence, for future selection. Individual actions in the action plan must be carried out and signed off as complete.

Many of these products also include features built into their platforms to help with root cause analysis. In addition, some vendors offer tools that collect and correlate the metrics from other platforms to help remediate a problem or outage event. Tools that include AIOps capabilities are able to learn from prior events to suggest remediation actions in the future.

Statistical procedures can certainly help throughout the RCA process. Statistical feature selection and root cause analysis help find the patterns that can predict problems and assist in sorting through all the data generated by all the stakeholders. It is wise to find the variables that actually predict, rather than those that people only think will predict.

Containers today are increasingly delivered as a service and used with ease. This maturity, together with stable features and well-defined APIs, makes containers an ideal technology and a better fit for mid-sized IT companies. Also, managed services offered around containers could be an opportunity for those willing to invest in a new business unit. The burst in app development over the last decade has been driven by cloud-native technologies like DevOps, microservices, containers, orchestrators, and serverless. An application must work as intended in development, test, and production environments to be successful.

How Does Container Orchestration Solve These Problems?

Here is the full extent of the differences between traditional deployment, virtualization, and containerization in one picture. VMs allow engineers to run numerous applications with their ideal OSs on a single physical server to increase processing power, reduce hardware costs, and reduce operational footprint. Containers and virtual machines are both forms of virtualization but take distinct approaches. VMs are suitable for workflows requiring complete isolation and security, such as sandboxing and running legacy applications. Containers on a failed node are quickly recreated by the orchestration tool on another node. Containers are ideal for consistent deployment environments and application dependency isolation.

What Are The Differences Between Pods, Nodes, Clusters, And Containers?

As we found in our Kubernetes in the Wild research, 63% of organizations are using Kubernetes for auxiliary infrastructure-related workloads versus 37% for application-only workloads. This means organizations are increasingly using Kubernetes not only for running applications, but also as an operating system. Kubernetes consists of clusters, where each cluster has a control plane (one or more machines managing the orchestration services) and one or more worker nodes.

Container Orchestration Platforms

Kubernetes ultimately runs containers using the same technologies shared by developer-oriented platforms like Docker. However, Kubernetes also includes extensive storage, networking, access control, and cloud vendor integration capabilities that make it ideally suited to operating cloud-native apps running in production. Enterprises that need to deploy and manage hundreds or thousands of Linux® containers and hosts can benefit from container orchestration. Kubernetes is an open-source container orchestration system that lets you manage your containers across multiple hosts in a cluster. It is written in the Go language and was originally developed by Google engineers; it was first announced in 2014, and version 1.0 was released in 2015. It is best suited to large enterprises, as it might be overkill for smaller organizations with leaner IT budgets.

Therefore, orchestration can be thought of as an end-to-end workflow automation solution. To manage Atlas infrastructure through Kubernetes, MongoDB provides custom resources, like AtlasDeployment, AtlasProject, AtlasDatabaseUser, and many more. A custom resource is a new Kubernetes object type provided with the Atlas Operator, and each of these custom resources represents and allows management of the corresponding object type in Atlas.

This use case best suits environments with mostly autonomous Kubernetes clusters that are occasionally needed to work together. In this Kubernetes use case, for example, an enterprise may have nodes in two public clouds, or even nodes in both private and public clouds, and use only Kubernetes for orchestration. With its widespread adoption and robust ecosystem, Docker continues to be a driving force in the evolution of software development and IT operations. Infrastructure as Code (IaC) is a powerful approach to managing IT infrastructure. It involves defining infrastructure in a descriptive language, such as YAML or JSON, and then using automation to provision and manage it. This enables consistent and repeatable infrastructure deployment, reducing the risk of configuration errors and ensuring scalability and reliability.

Kubernetes was designed to handle the complexity involved in managing all the independent components running simultaneously within a microservices architecture. For instance, Kubernetes’ built-in high availability (HA) feature ensures continuous operations even in the event of failure. And the Kubernetes self-healing feature kicks in if a containerized app or an application component goes down. The self-healing feature can immediately redeploy the app or application component, matching the desired state, which helps to maintain uptime and reliability. Container orchestration involves managing, deploying, and scaling containers in a clustered environment.
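The idea of self-healing by matching a desired state can be illustrated with a toy reconciliation loop. This is a conceptual sketch only: the `Cluster` class, its methods, and the `reconcile` function are hypothetical names invented for this example and are not Kubernetes APIs. A real orchestrator also handles scheduling, health checks, and networking.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Toy model: a desired replica count plus the containers actually running."""

    desired_replicas: int
    running: list[str] = field(default_factory=list)
    next_id: int = 0

    def start_container(self) -> None:
        self.running.append(f"app-{self.next_id}")
        self.next_id += 1

    def stop_container(self) -> None:
        self.running.pop()


def reconcile(cluster: Cluster) -> None:
    """Start or stop containers until the actual state matches the desired state."""
    while len(cluster.running) < cluster.desired_replicas:
        cluster.start_container()
    while len(cluster.running) > cluster.desired_replicas:
        cluster.stop_container()


cluster = Cluster(desired_replicas=3)
reconcile(cluster)                # initial rollout: three containers start
cluster.running.remove("app-1")   # simulate one container crashing
reconcile(cluster)                # a replacement is started automatically
```

The operator only declares the target (three replicas); the loop decides what to start or stop, which is the same division of labor the paragraph above describes.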

Kubernetes is a good fit for on-demand developer environments that allow you to build and test new changes in realistic configurations without requiring dedicated infrastructure to be provisioned. Using Kubernetes, multiple developers are able to work within one cluster, creating and destroying deployments as they work on each change. You can integrate Middleware with any (open source or paid) container orchestration tool and use its infrastructure monitoring capabilities to get complete analytics about your application’s health and status.

- The “container orchestration war” refers to a period of heated competition between three container orchestration tools: Kubernetes, Docker Swarm, and Apache Mesos.

- Both Kubernetes and Mesos have very large user bases, but not everyone has moved over to them yet.

- Microservices architecture is a distributed approach to building software systems, where each component is a separate service that communicates with others through well-defined APIs.

- The control plane provides a mechanism to enforce policies from a central controller to every container.

- You can then raise your Kubernetes Deployment’s replica count to roll out new instances of your app onto the additional Nodes.

- Monitoring and logging at this scale, especially in a dynamic environment where containers are constantly started and stopped, can be complex.

It is declared by developers and system administrators to represent the desired configuration of a system. They speed up each stage of the app creation process, from prototyping to production. It is easy to deploy new versions with new builds into production, and just as easy to revert to an older version if necessary. Because the open-source software is portable, DevOps processes become easier. Web application and API protection (WAAP) in any customer environment, all through one integrated platform.

While Kubernetes has become the de facto standard for container management, many companies also use the technology for a broader range of use cases. Kubernetes is one of the most popular container orchestration tools, offering features for managing clusters, deploying applications, and scaling resources. Container orchestration simplifies the management of complex containerized environments and enables efficient use of resources.

Containers are also significantly easier to manage and maintain than VMs, which is a significant benefit if you are already working in a virtualized environment. System administrators and DevOps teams can use container orchestration (CO) to manage large server farms housing thousands of containers. Without CO, everything would have to be done manually, and the situation would quickly become unsustainable.

So, when you containerize your workloads and decide to keep them on-premises, they’ll run more efficiently and use fewer resources while maximizing your existing investments. Also, migrating to a cloud platform down the road will be a simple transition, as containers run the same way no matter where you host them. In short, an SME can benefit from containers at every stage of the cloud journey. In broad strokes, the Kubernetes orchestration platform runs containers via pods and nodes. A pod runs one or more Linux containers and can run in multiples for scaling and failure resistance.

These fundamental capabilities, and the range of supporting options available, make Kubernetes applicable to virtually all cloud computing scenarios where performance, reliability, and scalability are important. Container orchestration is a software solution that helps you deploy, scale, and manage your container infrastructure. It lets you easily deploy applications across multiple containers by solving the challenges of managing containers individually. Mesos is a cluster management tool developed by Apache that can efficiently perform container orchestration. The Mesos framework is open source and can easily provide resource sharing and allocation across distributed frameworks. It enables resource allocation using modern kernel features, such as Zones in Solaris and cgroups in Linux.

Every time a developer pushes a change to the source code repository, Jenkins performs a build. Jenkins, the renowned open-source automation tool, plays a crucial role in transforming software development and delivery processes across various industries. Let’s explore some of the key use cases of Jenkins and how it empowers organizations to achieve continuous integration, automated testing, and efficient deployment. With Jenkins, organizations can accelerate the software development process by automating it. Jenkins manages and controls software delivery processes throughout the entire lifecycle, including build, document, test, package, stage, deployment, static code analysis, and much more. Because the tool is open source and extensible, it has empowered the Jenkins community to develop a robust ecosystem of plugins.

Configuration Added To All Jobs

Jenkins automatically generates a new project when a new branch is pushed to a source code repository. Other plugins can specify different branch types, such as a Git branch, a Subversion branch, a GitHub Pull Request, and so on. A pipeline is a set of steps the Jenkins server will execute to complete the necessary tasks of the CI/CD process. In the context of Jenkins, a pipeline refers to a set of jobs (or events) connected in a specific order. Jenkins Pipeline is a collection of plugins that enable the creation and integration of Continuous Delivery pipelines in Jenkins.

Researchers Determine Significant Vulnerabilities And Malicious Activities Inside Github

Jenkins is typically run as a Java servlet inside a Jetty application server, and other Java application servers, such as Apache Tomcat, can be used to run it. Jenkins dramatically improves the efficiency of the development process. For example, a command typed at the command prompt can be converted into a GUI button click using Jenkins. One can accomplish this by wrapping the script in a Jenkins job.

⚠ Anti-pattern: Scripting Your Own Deployments With Jenkins

Numerous plugins are available for specific tasks related to version control, source code management, build, testing frameworks, deployment targets, reporting, and more. This modular approach also allows organizations to create flexible and highly customized pipelines. Jenkins is a Java-based open-source automation platform with plugins designed for continuous integration. It is used to continuously build and test software projects, making it easier for developers and DevOps engineers to integrate changes to the project and for users to get a new build. It also lets you deliver your software continuously by integrating with numerous testing and deployment technologies.

Commonly Used Jenkins Plugins That We Support

Jenkins is primarily a tool for continuous integration (CI) but can also be used for continuous delivery (CD). A versatile platform suitable for various software engineering tasks, it’s primarily used to manage CI/CD pipelines to ensure changes are validated and deployed efficiently. Testkube is a Kubernetes-native testing framework designed to execute and orchestrate your testing workflows inside your Kubernetes clusters. Jenkins has a vibrant development community that meets both in person and online regularly.

The result has been higher-quality applications and faster delivery, which shortens time-to-market for software products. A Jenkins Secondary is a Java executable running on a remote server. The Jenkins Secondary server is designed to be compatible with most operating systems and follows all of the requests from the Jenkins Primary. It executes all the build jobs it receives from the Jenkins Primary server.

The Jenkinsfile tells the Jenkins server the sequence of steps to be carried out in each stage. This file provides a way to define pipelines in a version-controlled and reproducible manner, allowing teams to manage their delivery process alongside their software code. Before Jenkins became commonplace in software development circles, development teams used nightly builds in their software delivery processes. Team members would commit the code they had been working on throughout the day to the source code repository, and builds would run overnight to identify errors.

If a developer is working on several environments, they will need to install or upgrade an item on each of them. If the installation or update requires more than 100 steps to complete, doing it manually will be error-prone. Instead, you can write down all the steps needed to complete the activity in Jenkins. It will take less time, and you’ll complete the installation or update without issue. Jenkins is an open-source CI/CD server that helps automate software development and DevOps processes. In the commit stage, developers use Git, Subversion, Mercurial, and other version control software.

Jenkins facilitates the creation of a well-defined CI/CD pipeline, ensuring order and efficiency throughout the software development process. With Jenkins, you can seamlessly integrate version control tools like GitHub, GitLab, and so on, keeping your codebase organized and accessible. Before a change to the software can be released, it must go through a series of complex processes. The Jenkins pipeline enables the interconnection of many events and tasks in a sequence to drive continuous integration. It has a collection of plugins that make integrating and implementing continuous integration and delivery pipelines a breeze. A Jenkins pipeline’s main characteristic is that each task or job depends on another task or job.

- Software companies can speed up their software development process by adopting Jenkins, which can automate testing and builds at a high pace.

- You probably already have Jenkins running to automate the build process of your applications.

- Add to your Jenkins installation to enable Deployment Automation publish build steps.

- If the test fails, GitHub will prevent the PR from being merged and will require an admin to approve and merge it.

- Regardless of what tool you use, CI is a fairly standardized process.

- This script will generate a Pipeline job called “test-pipeline” and configure the job to use a Jenkinsfile stored in a Git repository with credentials.

In our example we are just printing a simple message to the console for each stage. Jenkins is built to be extensible across any environment and platform for faster development, testing, and deployment. It is more adaptable thanks to its sizable plugin library, which allows for building, deploying, and automating across various platforms. Fortunately, Java is a well-known enterprise programming language with a large ecosystem. This provides Jenkins with a stable foundation on which it can be built using standard design patterns and tools. Further, it supports manual testing where necessary without switching environments.

When you trigger a build, Jenkins will pull the latest code from the source code repository and start the build process. The build process will execute the build commands in the job definition. If the build is successful, Jenkins will execute the test commands. If the tests are successful, Jenkins will deploy the software to a production environment. Jenkins Pipeline comprises a number of plugins that support the implementation and integration of CI pipelines in Jenkins. This tool suite is extensible and can be used to model continuous delivery pipelines as code, regardless of their complexity.
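The build, test, deploy sequence above is a chain in which each stage runs only if the previous one succeeded. The sketch below illustrates that control flow in plain Python; it is not Jenkins code, and `run_pipeline` and the stage callables are hypothetical names used only for this illustration.

```python
from typing import Callable


def run_pipeline(stages: list[tuple[str, Callable[[], bool]]]) -> list[str]:
    """Run stages in order and stop at the first one that fails.

    Each stage is a (name, step) pair where `step` returns True on success.
    Returns a log with one line per stage that was actually executed.
    """
    log = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'ok' if ok else 'failed'}")
        if not ok:
            break  # later stages (for example deploy) are skipped
    return log


if __name__ == "__main__":
    stages = [
        ("build", lambda: True),
        ("test", lambda: False),   # simulate a failing test run
        ("deploy", lambda: True),
    ]
    for line in run_pipeline(stages):
        print(line)
```

Because the loop breaks on the first failure, a failing test stage means the deploy stage never runs, which is the safety property the pipeline is meant to provide.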

We just add new TESTMO_URL and TESTMO_TOKEN credentials (as text types) and enter our URL and API key. We can then reference these credentials and set them as environment variables in our pipeline config (see above). Why not just write these values directly into the Jenkinsfile config? You should never commit such secrets to your Git repository, as everyone with (read) access to the repository would then know your access details. Instead, you’ll use the secret/variable management feature of your CI tool for this.
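On the consuming side, a script run by the pipeline can read these values from its environment instead of from a committed file. This is a minimal sketch assuming the CI tool exports `TESTMO_URL` and `TESTMO_TOKEN` as environment variables, as described above; the helper name `load_testmo_settings` is hypothetical.

```python
import os
from typing import Mapping, Optional


def load_testmo_settings(env: Optional[Mapping[str, str]] = None) -> dict[str, str]:
    """Read the Testmo URL and token from environment variables.

    `env` defaults to the real process environment; passing a plain dict
    makes the function easy to exercise without touching os.environ.
    """
    env = os.environ if env is None else env
    missing = [key for key in ("TESTMO_URL", "TESTMO_TOKEN") if not env.get(key)]
    if missing:
        # Fail early with a clear message instead of sending empty credentials.
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return {"url": env["TESTMO_URL"], "token": env["TESTMO_TOKEN"]}


if __name__ == "__main__":
    settings = load_testmo_settings(
        {"TESTMO_URL": "https://example.invalid", "TESTMO_TOKEN": "not-a-real-token"}
    )
    print(settings["url"])
```

Nothing secret appears in the source file, so the repository can be shared without exposing the access details.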

This article will explore Jenkins, its features, and how it can facilitate test automation. This pipeline will first build the project using Maven, then test the project, and finally deploy the project. Jenkins X is useful regardless of your familiarity with Kubernetes, providing a CI/CD process to facilitate cloud migration. It supports bootstrapping onto your chosen cloud, which is essential for a hybrid setup. Jenkins is distributed as WAR files, installers, Docker images, and native packages. Jenkins can be used to schedule and monitor the running of a shell script through a user interface instead of the command prompt.

You may need more than one Jenkins server to test code in different environments. If that is the case, you can use the distributed Jenkins architecture to implement continuous integration and testing. The Jenkins server can access the Controller environment, which distributes the workload across different Jenkins Agents.
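To make the idea of a controller spreading work across agents concrete, here is a small sketch that assigns jobs in round-robin order. Round-robin is an assumption made for illustration; Jenkins uses its own scheduling logic, and `assign_jobs` and the agent names are hypothetical.

```python
from itertools import cycle


def assign_jobs(jobs: list[str], agents: list[str]) -> dict[str, list[str]]:
    """Distribute jobs across agents in round-robin order."""
    if not agents:
        raise ValueError("at least one agent is required")
    assignment: dict[str, list[str]] = {agent: [] for agent in agents}
    # cycle() repeats the agent list for as long as there are jobs left.
    for job, agent in zip(jobs, cycle(agents)):
        assignment[agent].append(job)
    return assignment


if __name__ == "__main__":
    print(assign_jobs(["build-api", "build-web", "run-tests"], ["agent-1", "agent-2"]))
```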

Real data, which is sourced from production databases and later masked to protect it, is vital for testing applications. It is crucial that the test data is validated and that the resulting test cases give a genuine picture of the production environment when the application goes live. The key advantages of the TDM process are quality data and very good data coverage.
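As an illustration of masking, the sketch below replaces sensitive fields with a deterministic pseudonym so that the same input always maps to the same masked value and joins between tables still line up. The salted SHA-256 approach, the `user_` prefix, and the function names are assumptions made for this example; a real TDM tool may mask data quite differently.

```python
import hashlib


def mask_value(value: str, salt: str = "demo-salt") -> str:
    """Return a deterministic pseudonym for a sensitive value."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return "user_" + digest[:12]


def mask_records(records: list[dict], sensitive_fields: set[str]) -> list[dict]:
    """Copy the records, masking only the fields named in `sensitive_fields`."""
    return [
        {
            key: mask_value(str(value)) if key in sensitive_fields else value
            for key, value in record.items()
        }
        for record in records
    ]


if __name__ == "__main__":
    production_rows = [{"id": 1, "email": "jane@example.com", "plan": "pro"}]
    print(mask_records(production_rows, {"email"}))
```

In practice the salt would be kept secret and outside the repository, since anyone who knows it can recompute the pseudonym for a guessed input.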

Use our vendor lists or research articles to identify how technologies like AI / machine learning / data science, IoT, process mining, RPA, synthetic data can transform your business. Improving testing has several obvious benefits for organizations, from providing a cost-effective solution to enhancing product reliability. As a result, test data management and what’s important in it are ever-changing. In today’s post, we defined test data management by using a divide and conquer approach. Then we gave you a quick overview of three different tools for your consideration.

Best tools for the Test Data Management:

During provisioning, data is moved into the testing environment. Automated tools provide the ability to enter test sets into test environments using CI/CD integration, with the option for manual adjustment. Copying all production data is often a waste of resources and time. With data slicing, a manageable set of relevant data is gathered, increasing the speed and cost-efficiency of testing.
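Data slicing can be sketched as picking a small set of parent records and then keeping only the child records that refer to them, so the subset stays internally consistent. The table shapes (`customers`, `orders`, `customer_id`) and the function name are hypothetical and exist only for this illustration.

```python
def slice_data(customers: list[dict], orders: list[dict], limit: int) -> tuple[list[dict], list[dict]]:
    """Take the first `limit` customers plus only the orders that belong to them."""
    selected = customers[:limit]
    selected_ids = {customer["id"] for customer in selected}
    # Dropping orders of unselected customers keeps foreign keys valid.
    related_orders = [order for order in orders if order["customer_id"] in selected_ids]
    return selected, related_orders


if __name__ == "__main__":
    customers = [{"id": 1}, {"id": 2}, {"id": 3}]
    orders = [
        {"order_id": 10, "customer_id": 1},
        {"order_id": 11, "customer_id": 3},
        {"order_id": 12, "customer_id": 2},
    ]
    print(slice_data(customers, orders, limit=2))
```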

- We’ve covered the definition of test data management, its importance, types of test data, management strategies, and benefits.

- For instance, it’s not that much of a stretch to say that canary releases are a type of test.

- When load is induced by multiple users, the data provisioned for them is consumed rapidly.

- Another obvious standout advantage of getting to grips with data, not just for the test but enterprise-wide.

The best known of these regulations is probably GDPR, but it’s not the only one. No matter what specific regulations your jurisdiction is under, what matters is that you have to adhere to them. Failing to do so might result in serious financial and legal consequences. And that’s not even to mention the damage to the company’s reputation.

What are the common types of Test Data?

TDM tools can speed up scenario identification and creation of the corresponding data sets. It is well known that automation requires highly stable, predictable data sets, whereas manual testing can easily adapt to a higher degree of variability. Increases in data set size and in the number of upstream systems, database instances, and data sets make it difficult to manage the test data. Hence, enterprises need access to test automation strategies that embody the principles of test data management. With our state-of-the-art automation testing platform, enterprises can build resilient test data management strategies and implement them for better digital ROI.

For example, a banking system will have a CRM system, a financial application for transactions, and messaging systems for SMS and OTP coupled to them. Here, the person analyzing the test data requirement should have expertise in the banking domain, the CRM and financial applications, and the messaging system. A database administrator creates and runs SQL queries on the database tables to gather the required test data. If such critical and high-risk data is used improperly, legal action by customers is likely.

Who should be responsible for test data management within the enterprise?

When picking a TDM tool, organizations must research how well the available tools handle the aforementioned pitfalls and challenges. The same test data is often available to different test teams in the same environment, resulting in data corruption. The testing pyramid is a mental framework that allows you to reason about the different types of software tests and understand how to prioritize between them. Great test data must have other properties besides quality. It doesn’t matter if you have high-quality data if it doesn’t get to your tests when they need it. It’s also essential that test data delivery is automatable and can integrate with the existing toolchain to be incorporated into the CI/CD pipeline.

V. Data subset creation is the most used data creation approach in the test data management process. The other two approaches are usually avoided due to the cost involved and data sensitivity. In simple terms, test data management is the process of planning, designing, storing, and retrieving test data. TDM ensures that test data is of high quality, appropriate quantity, and proper format, and that it fulfils the testing requirements in a timely manner.

How Can Enterprises Build the Most Effective Test Data Management Strategy?

The test data should be carefully selected to ensure that the software is thoroughly tested. But there’s no reason to despair because there’s light at the end of the tunnel. And this light is called “artificial intelligence.” In recent years, the number of testing tools that leverage the power of AI has greatly increased. Such tools are able to help teams beat the challenges that get in their way with an efficiency that just wasn’t possible before.

Quality assurance is a time-consuming, costly process, but it is also necessary for launching functional, user-friendly applications. TDM processes allow for faster error identification, improved security, and more versatile testing compared to the traditional siloed method. TDM also ensures all production data is sufficiently masked before testing, keeping your organization compliant with all privacy regulations.

Write In-depth, Quality Test Cases and Code

The sheer volume of test data that is repeatedly used in the regression suites makes it an important focal area from the ROI viewpoint. The right TDM tools can help provision a spectrum of data and ensure continuous ROI in each cycle. Such data is usually created by using one of the automated processes, either from the user interface front end or via create or edit data operations in the database. These methods are time-consuming and may require the automation team to acquire application as well as domain knowledge. Study, adopt, and apply data access control by leveraging test data management tools to address data privacy concerns and resolve usage contention issues.

Using LambdaTest, businesses can ensure that their products have been tested thoroughly and achieve a faster go-to-market. In the same environment, testers modify existing data as per their need. The next tester picks up this modified data and performs another test execution, which may result in a failure that is not due to a code error or defect.
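One common way to avoid the shared-data problem described above is to give every test its own copy of a known baseline. The sketch below shows the idea with `copy.deepcopy`; the baseline contents and the helper name `fresh_test_data` are hypothetical.

```python
import copy

# Baseline data set that no test is allowed to modify directly.
BASELINE = {"account": {"owner": "test-user", "balance": 100}}


def fresh_test_data() -> dict:
    """Return an independent deep copy of the baseline for a single test."""
    return copy.deepcopy(BASELINE)


if __name__ == "__main__":
    first = fresh_test_data()
    first["account"]["balance"] = 0          # the first tester changes the data
    second = fresh_test_data()
    print(second["account"]["balance"])      # the next tester still sees the baseline
```

A shallow copy would not be enough here, because the nested `account` dictionary would still be shared between tests.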

How Do You Manage Test Data?

Software testing data can also be synthetic, which means it’s artificially manufactured to replicate the behavior of real users as accurately as possible. It’s often used to test new features or upgrades before they go live. Data breaches can result in substantial damage to a company’s image, as users will become reluctant to use an application prone to leaks. Test data management implementation helps garner user confidence by both preventing leaks and assuring potential users their data will be kept secure.

An easy method for setting up an automated pipeline is offered by Azure DevOps Build Pipelines. As a result, software development and deployment can be done entirely in the Azure cloud. Continuous Integration – This practice involves a continuous feedback mechanism between testing and development to make code ready for production as early as possible. It encompasses the configuration management, test, and development tools used to mark the progress of the production-ready code.

- Codefresh is the most trusted GitOps platform for cloud-native apps.

- In a nutshell Jenkins CI is the leading open-source continuous integration server.

- The team uses backlog information to prioritize bug fixes and new features on Azure Boards.

- It allows teams to use the same tools and services but hosted on their own servers.

- It’s built on Argo for declarative continuous delivery, making modern software delivery possible at enterprise scale.

Interested in improving your DevOps skills around Azure’s platform? After learning the basics of Azure DevOps, head over to our Microsoft Azure Administrator Exam. This exam will prepare you with the skills you need to manage and administer solutions in Azure, helping you along in your DevOps career. Pending tasks are automatically recalculated when reporting the duration, and solved tasks have their durations reset to zero. In addition, you can start for free, add up to five users at no cost, and have an unlimited number of stakeholders.

Release management

Work items can be organized into iterations and areas along different dimensions. Iterations are used for planning, whereas areas are used to logically divide the work. A developer will commit their code after the work item is finished. This commit triggers a build, which verifies that the most recent code compiles and is ready for deployment. Version control helps you track the changes you make to your code over time. As you edit your code, you tell the version control system to take a snapshot of your files.

This allows tools on any platform and any IDE that support Git to connect to Azure DevOps. For example, both Xcode and Android Studio support Git plug-ins. In addition, if developers do not want to use Microsoft’s Team Explorer Everywhere plug-in for Eclipse, they can choose to use eGit to connect to Azure DevOps. Microsoft Excel and Microsoft Project are also supported to help manage work items which allows for bulk update, bulk entry and bulk export of work items.

Reporting

My team uses this solution for CI/CD deployment and code check-ins. We are also using Azure Boards for tracking our work: all of the requirements, the backlogs, the sprints, and the release planning. We basically maintain what would be the equivalent of our project schedules for various projects.

These include Continuous Integration and Continuous Delivery (CI/CD) tools to perform task automation. The platform covers practices such as building code, deploying code, and CI/CD, and provides many built-in services. DevOps enables different teams, such as development, IT operations, quality assurance, and security, to collaborate on producing more robust and reliable products. Organizations building a DevOps culture gain the ability to respond quickly and efficiently to customer needs. There is a wide range of DevOps tools available in the market today with broadly similar capabilities.

This course will show you how Azure DevOps works, how to get started, and tips and tricks on how to get the most out of Azure Boards, Azure Repos, Azure Pipelines, Azure Test Plans, and Azure Artifacts. Once upon a time, there was a Microsoft product called Team Foundation Server (TFS). The online version of TFS then became Visual Studio Online, which became Visual Studio Team Services, which is now Azure DevOps.

Jira will provide the tools you need if you know what you need. Artifacts is one of the extensions of Azure DevOps that helps us create, host, manage, and share packages across the team. Azure Artifacts supports multiple types of packages, such as npm, NuGet, Maven, and Python. Artifacts are basically a collection or output of DLL, RPM, JAR, and many other types of files. Release pipelines are very similar to build pipelines but are used for deploying your applications to your servers.

Codefresh: an Azure DevOps Alternative

After that, we use it to continue the process of development and deployment. I work for a telecommunication company that offered television via IPTV. IPTV is an internet protocol television, such as AT&T U-verse or Fios from Verizon.

Azure Boards is a powerful tool for teams looking to optimize their workflows and collaborate more effectively on software development projects, and it supports both Scrum and Kanban. The solution can be best described as a set of development tools and services provided by Microsoft that allows teams to plan, build, test, and deliver software efficiently and effectively. It serves as a comprehensive platform that includes everything from agile project management to continuous integration and delivery. Additionally, a built-in Wiki and reporting capabilities make it a one-stop shop for teams seeking to streamline their development process. Both GitHub and Azure DevOps are focused on Git with an emphasis on the various aspects of a collaborative software development process. Both services provide comprehensive and feature-rich platforms to share and track code and build software with CI/CD.

Essential Operations Manager Skills

Here in Europe, we need anonymous synchronization of all data for testing. We create special applications for creating data for direct tests. Artifacts uses the NuGet Server and supports Maven, npm and Python packages.

A website’s performance is a significant factor in user engagement and retention. Research suggests that if a website takes longer than 3 seconds to load, many users leave and don’t return. Website performance is the speed at which a web page downloads, renders, and displays in a web browser.

It refers to the time spent sending data from one tracked process to another. The main difference to keep in mind is that horizontal scaling adds more machines to your existing infrastructure, whereas vertical scaling adds power, such as CPU or RAM, to the machines you already have. If you face less demand during the off-season, you can scale down to reduce your IT costs. You can find a detailed guide on how much it costs to build a web app. However, the process of building a web app does not finish here.

Large Businesses

There is extensive documentation and plenty of handy tools available for developers. ReactJS uses a virtual DOM which means concerned elements are updated when a change is made instead of the entire DOM tree being rewritten. ReactJS uses a one-way Data flow which means changes made to the “child” elements do not affect the “parent” element.

Most scalability issues can be prevented at the initial stage of development. When you start building your product, you need to think about future growth and know the challenges you may face. For example, if you’re building an MVP according to a single-server architecture, you should be ready to shift to a multi-server architecture at some point. In conclusion, web application architecture plays a vital role in the development and success of any web application. The microservice architecture pattern can be greatly enhanced by using serverless resources. In the microservice development model, application components are separated, enabling more flexibility in deployment.

SCALING BACK-END

Web development best practices strive to separate different architectural layers. You should also keep background jobs separate from the main system. In normal situations, software performance changes as the load grows, and this process is usually gradual. However, there are situations where the jumps are too high and can unexpectedly cause software failure. The saturation point is the level of load that software can barely handle and at which it starts to malfunction.
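Given load-test measurements, the saturation point can be estimated as the lowest load at which the error rate crosses a chosen threshold. The sketch below assumes the measurements are `(requests_per_second, error_rate)` pairs and uses a 1% threshold; both the data shape and the threshold are assumptions made for illustration.

```python
from typing import Optional


def saturation_point(samples: list[tuple[int, float]], max_error_rate: float = 0.01) -> Optional[int]:
    """Return the lowest load whose error rate exceeds `max_error_rate`.

    `samples` holds (load, error_rate) pairs from a load test.
    Returns None if no measured load exceeds the threshold.
    """
    for load, error_rate in sorted(samples):
        if error_rate > max_error_rate:
            return load
    return None


if __name__ == "__main__":
    measurements = [(100, 0.0), (200, 0.005), (400, 0.03), (800, 0.2)]
    print(saturation_point(measurements))
```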

I know this third-party vendor changes API calls every few months, breaking my client’s authentication. APIs are the bridge between the web pages and the database. APIs transfer data from data sources to web browsers and vice versa.

DevOps Financial Services

This component is responsible for interpreting user actions in the interface, processing requests, and returning correct responses to them. When building a website that may get a ton of traffic, you want to make sure that you choose a scalable and high-performance database engine. For example, some databases are not designed for highly trafficked websites. A typical website is a large web application that does more than host a few web pages. A website typically involves backend databases, APIs or data access services, web servers and services, backend services, and front-end web pages.

To build a web application, you need to know how it differs from a website. Web application development means creating a platform for interaction. When developing a web app, you won’t have to select a platform to build the application.

Persistence layer

There are several challenges to building a scalable web application like the choice of framework, testing, deployment, and even infrastructure needs. As mentioned earlier, a scalable application needs different layers to scale independently rather than as a stack of tightly coupled components. Now, let’s look at some successful applications that leveraged such scalable web architecture.

- However, the hierarchical structure can sometimes make debugging a challenge.

- Building with scalability in mind will help you improve your ability to serve customer requests under heavy load, as well as reduce downtime due to server crashes.

- It is also essential to ensure the web application’s reliability and security.

- Due to the “Test First” approach of TDD, there is rapid feedback to the functionality of applications predefined by test codes.

- The idea was to create an app to predict the success of a song with the help of Artificial Intelligence and user interaction.

- This article guides how to create scalable web applications that can process a huge data stream without unexpected breakdowns.

- Security in scalable web services is mainly about limiting the attack surface.

Node.js is an open-source cross-platform runtime environment developed by Ryan Dahl. It was built on Google Chrome V8 Engine to run network and server-side applications and was released in 2009. Developers use JavaScript to build node.js applications and run them on node.js runtime using Windows, macOS and Linux platforms.

How to design a highload app

It is necessary to hire a dedicated software development team with extensive expertise in creating scalable software to follow this principle. In the first case, the load is reduced by processing certain parts of requests through the network and creating a reliable data recovery system. In the second, it is reduced by distributing data across several databases and adding more nodes as the user flow increases. Database management is vital to product scalability and performance.

For example, if a node has a health check that is critical then all services on that node will be excluded because they are also considered critical. node_ttl – By default, this is “0s”, so all node lookups are served with a 0 TTL value. DNS caching for node lookups can be enabled by setting this value. This should be specified with the “s” suffix for second or “m” for minute.
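To show what a value such as “0s” or “5m” means in seconds, here is a small sketch that converts the two suffixes mentioned above. It is not Consul’s own parser, which accepts a wider duration syntax; `parse_ttl` is a hypothetical helper that handles only whole numbers followed by “s” or “m”.

```python
def parse_ttl(value: str) -> int:
    """Convert a TTL such as '10s' or '5m' into a number of seconds."""
    units = {"s": 1, "m": 60}
    number, suffix = value[:-1], value[-1:]
    if suffix not in units or not number.isdecimal():
        raise ValueError(f"unsupported TTL value: {value!r}")
    return int(number) * units[suffix]


if __name__ == "__main__":
    for ttl in ("0s", "10s", "5m"):
        print(ttl, "=", parse_ttl(ttl), "seconds")
```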

In order to change the value of these options after bootstrapping, you will need to use the Consul Operator Autopilot command. For more information about Autopilot, review the Autopilot tutorial. Scaleway SD configurations allow retrieving scrape targets from Scaleway instances and baremetal services.

Example Docker Config

# Initial connection timeout, used during initial dial to server.
# Comma-separated hostnames or IPs of Cassandra instances.
# Directory where the WAL data is stored and/or recovered from.

- Runtime config files will be merged from left to right.

- To explicitly link to the time zone database in versions of MongoDB prior to 5.0, 4.4.7, and 4.2.14, download the time zone database.

- The ping time, in milliseconds, that mongos uses to determine which secondary replica set members to pass read operations from clients.

- GCE SD configurations allow retrieving scrape targets from GCP GCE instances.

- For incoming connections, the server accepts both TLS and non-TLS.

- Send the x.509 certificate for authentication and accept only x.509 certificates.

- One use for this is ensuring that an HA pair of Prometheus servers with different external labels sends identical alerts.
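The first item in the list above says runtime config files are merged from left to right. A minimal sketch of one way such a merge can work follows, assuming that later files override earlier ones and that nested sections are merged key by key. The function name `merge_configs` and the sample keys are hypothetical and are not taken from any particular tool.

```python
from typing import Any


def merge_configs(*configs: dict[str, Any]) -> dict[str, Any]:
    """Merge dictionaries left to right; values further right win.

    Assumption for this sketch: nested dictionaries are merged
    recursively instead of being replaced wholesale.
    """
    merged: dict[str, Any] = {}
    for config in configs:
        for key, value in config.items():
            if isinstance(value, dict) and isinstance(merged.get(key), dict):
                # Both sides are sections: merge them into a new dict.
                merged[key] = merge_configs(merged[key], value)
            else:
                merged[key] = value
    return merged


# Hypothetical contents of two runtime config files, already parsed.
base = {"limits": {"rate": 10, "burst": 20}, "mode": "safe"}
override = {"limits": {"rate": 50}}

effective = merge_configs(base, override)
# effective == {"limits": {"rate": 50, "burst": 20}, "mode": "safe"}
```

The inputs are left unmodified, so the same base file can be combined with different overrides.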

Some of these settings may also be applied automatically by auto_config or auto_encrypt. ui_config – This object allows a number of sub-keys to be set which control the display or features available in the UI. Configuring the UI with this stanza was added in Consul 1.9.0. disable_hostname – This controls whether or not to prepend runtime telemetry with the machine’s hostname; it defaults to false. circonus_api_token – A valid API token used to create and manage the check.

Overriding Individual Options

# Period with which to poll DNS for memcache servers.
Common configuration to be shared between multiple modules. If a more specific configuration is given in other sections, the related configuration within this section will be ignored.
# Signature Verification 4 signing process to sign every remote write request.
# The default tenant’s shard size when shuffle-sharding is enabled in the ruler.

min_quorum – Sets the minimum number of servers necessary in a cluster. Autopilot will stop pruning dead servers when this minimum is reached. max_trailing_logs – Controls the maximum number of log entries that a server can trail the leader by before being considered unhealthy.

When accessing this hidden route, you will then be redirected to the / route of the application. Once the cookie has been issued to your browser, you will be able to browse the application normally as if it was not in maintenance mode.

Testing Type-Specific Options

The configuration is used to change how the chart behaves. There are properties to control styling, fonts, the legend, etc. You can also define whether you want to perform any specific actions before launching the application, for example, compile the modified sources or run an Ant or Maven script. A configuration can be created from a template or copied from an existing configuration.

Promtail saves the last successfully-fetched timestamp in the position file. If a position is found in the file for a given zone ID, Promtail will restart pulling logs from that position. When no position is found, Promtail will start pulling logs from the current time.
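The resume behaviour described above can be sketched as follows. This is an illustration of the logic only, not Promtail's implementation: the in-memory dictionary stands in for the position file, and the names `resume_point` and `record_fetch` are hypothetical.

```python
from datetime import datetime, timezone
from typing import Optional


def resume_point(positions: dict[str, datetime], zone_id: str,
                 now: Optional[datetime] = None) -> datetime:
    """Return the timestamp from which log pulling should start."""
    if zone_id in positions:
        # A position was saved for this zone: restart from it.
        return positions[zone_id]
    # No saved position: start from the current time.
    return now if now is not None else datetime.now(timezone.utc)


def record_fetch(positions: dict[str, datetime], zone_id: str,
                 last_timestamp: datetime) -> None:
    """Save the last successfully fetched timestamp for a zone."""
    positions[zone_id] = last_timestamp


positions: dict[str, datetime] = {}
saved = datetime(2023, 5, 15, 7, 0, tzinfo=timezone.utc)
record_fetch(positions, "zone-a", saved)

start_known = resume_point(positions, "zone-a")  # resumes at `saved`
start_new = resume_point(positions, "zone-b")    # falls back to "now"
```

Passing `now` explicitly makes the fallback deterministic, which is convenient in tests.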

How to use configuration in a sentence

Select the staging environment using the APP_ENV env var as explained in the previous section. After deploying to production, you want that same application to be optimized for speed and only log errors. You must use processManagement.windowsService.servicePassword in conjunction with the --install option. You must use processManagement.windowsService.serviceUser in conjunction with the --install option. You must use processManagement.windowsService.description in conjunction with the --install option. The name listed for MongoDB on the Services administrative application.

Enable or disable the validation checks for TLS certificates on other servers in the cluster and allow the use of invalid certificates to connect. For clients that don’t provide certificates, mongod or mongos encrypts the TLS/SSL connection, assuming the connection is successfully made. Starting in MongoDB 4.0, you cannot specify net.tls.CRLFile on macOS. See net.ssl.certificateSelector in MongoDB 4.0 and net.tls.certificateSelector in MongoDB 4.2+ to use the system SSL certificate store. On Linux/BSD, if the private key in the x.509 file is encrypted and you do not specify the net.tls.clusterPassword option, MongoDB will prompt for a passphrase.

Using other Configuration Languages

Your system must have a FIPS-compliant library to use the net.ssl.FIPSMode option. When using the secure store, you do not need to, but can, also specify the net.ssl.clusterCAFile. On macOS, if the private key in the x.509 file is encrypted, you must explicitly specify the net.ssl.clusterPassword option.

The jobs will continue to be handled as normal once the application is out of maintenance mode. Your maintenance mode secret should typically consist of alpha-numeric characters and, optionally, dashes. You should avoid using characters that have special meaning in URLs such as ?. Retrieve a default value if the value does not exist…
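The advice above on maintenance mode secrets can be checked mechanically. The sketch below is a hypothetical helper, not part of any framework: it accepts only letters, digits, and dashes, which also excludes URL-special characters such as ?.

```python
import re

# Letters, digits, and dashes only, as recommended above.
_SECRET_PATTERN = re.compile(r"[A-Za-z0-9-]+")


def is_url_safe_secret(secret: str) -> bool:
    """Return True if the secret uses only alphanumerics and dashes."""
    return _SECRET_PATTERN.fullmatch(secret) is not None


good = is_url_safe_secret("1630542a-246b-4b66-afa1-dd72a4c43515")
bad = is_url_safe_secret("what?now")
```

An empty string is rejected as well, since the pattern requires at least one character.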

Vocabulary lists containing configuration

On macOS, if the private key in the PEM file is encrypted, you must explicitly specify the net.ssl.PEMKeyPassword option. Alternatively, you can use a certificate from the secure system store (see net.ssl.certificateSelector) instead of a PEM key file or use an unencrypted PEM file. With net.ssl.sslOnNormalPorts, a mongos or mongod requires TLS/SSL encryption for all connections on the default MongoDB port, or the port specified by net.port. Enable or disable the use of the FIPS mode of the TLS library for the mongos or mongod.